What Is Agentic AI? A Plain-English Guide

You keep hearing "agentic AI" everywhere: every tech blog, every startup. But what does it actually mean and, more importantly, what does it mean for you, your work, and the tools you use?

Here's the short version.

Agentic AI is AI that can figure out what to do on its own, use tools to get it done, and keep going until it reaches your goal. No hand-holding required.

A regular AI model is like a fast intern who answers the question you ask. An agentic AI is like a reliable employee who gets a goal, makes a plan, and executes it. You check in at the end.

What does that actually look like?

Let's say you want to research a competitor. A regular AI would answer: "Here's a summary of Competitor X based on their website."

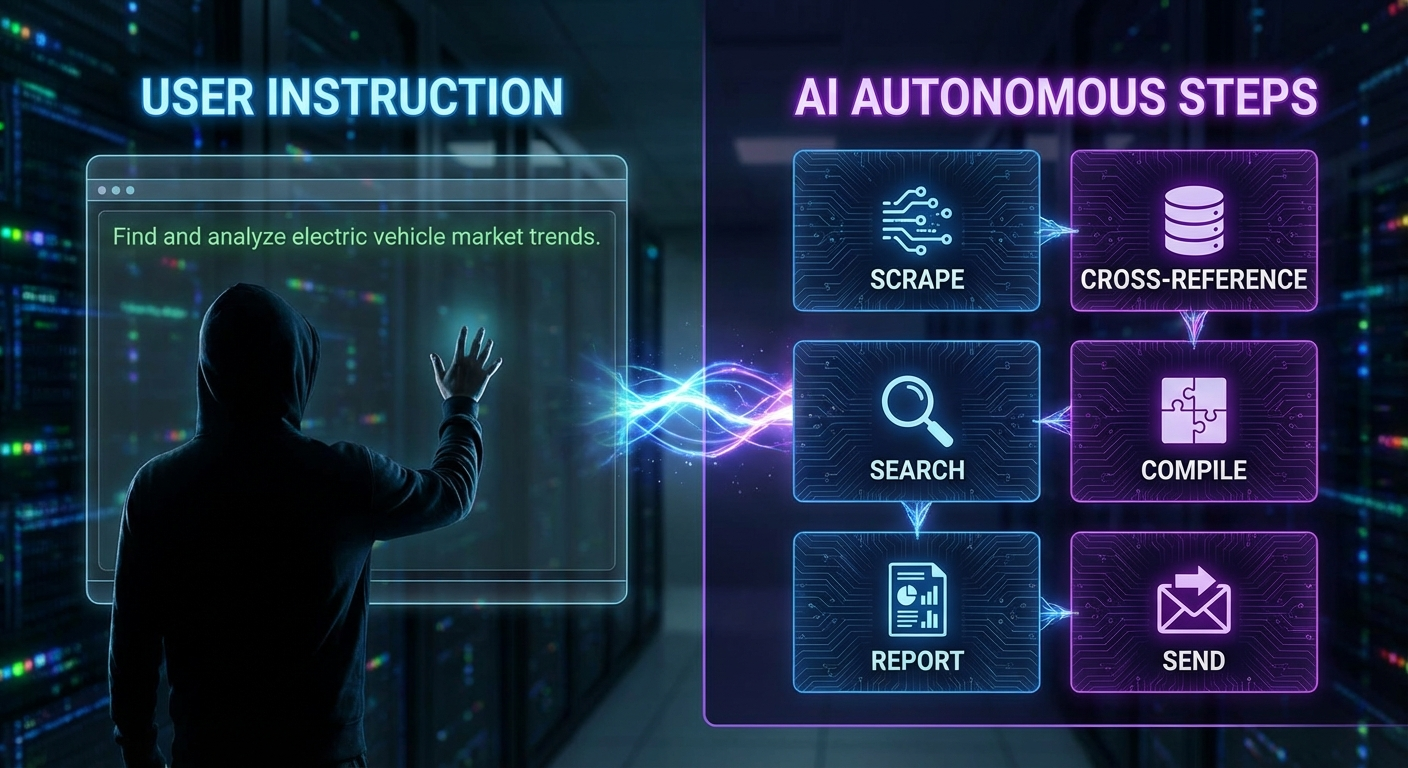

An agentic AI would:

- Scrape Competitor X's website for pricing, features, and blog posts

- Cross-reference with LinkedIn to find their team size and recent hires

- Search for news articles and press releases about them

- Look at job postings to see what they're building next

- Compile all of that into a structured report

- Send you a summary via Slack or email

All of that, autonomously. You gave it one instruction: "Research Competitor X." It handled the rest.
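If you're curious what "handled the rest" looks like in code, here's a minimal sketch of the loop behind the steps above. Everything in it is made up for illustration: the three tool functions return canned text instead of touching the web, and `choose_next_tool` stands in for the model's planning step. A real agent would ask a language model which tool to use next.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional

# Hypothetical stand-ins for real tools; each just returns canned text.
def scrape_website(company: str) -> str:
    return f"{company}: pricing and feature pages"

def search_news(company: str) -> str:
    return f"{company}: recent press coverage"

def check_job_postings(company: str) -> str:
    return f"{company}: open engineering roles"

TOOLS: Dict[str, Callable[[str], str]] = {
    "scrape_website": scrape_website,
    "search_news": search_news,
    "check_job_postings": check_job_postings,
}

@dataclass
class AgentState:
    goal: str
    findings: Dict[str, str] = field(default_factory=dict)

def choose_next_tool(state: AgentState) -> Optional[str]:
    """Stand-in for the model's planning step: pick a tool not used yet."""
    for name in TOOLS:
        if name not in state.findings:
            return name
    return None  # nothing left to gather, so the goal counts as reached

def run_agent(company: str, max_steps: int = 10) -> AgentState:
    """Loop: decide, act, record, repeat until done or out of steps."""
    state = AgentState(goal=f"Research {company}")
    for _ in range(max_steps):
        tool_name = choose_next_tool(state)
        if tool_name is None:
            break
        state.findings[tool_name] = TOOLS[tool_name](company)
    return state

def compile_report(state: AgentState) -> str:
    """Turn the collected findings into a plain-text report."""
    lines = [state.goal]
    lines += [f"- {tool}: {result}" for tool, result in state.findings.items()]
    return "\n".join(lines)

print(compile_report(run_agent("Competitor X")))
```

The part to notice is the loop: decide, act, record, repeat. The `max_steps` cap is there so the agent always stops, even if its planning step never says "done."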

Why this matters now

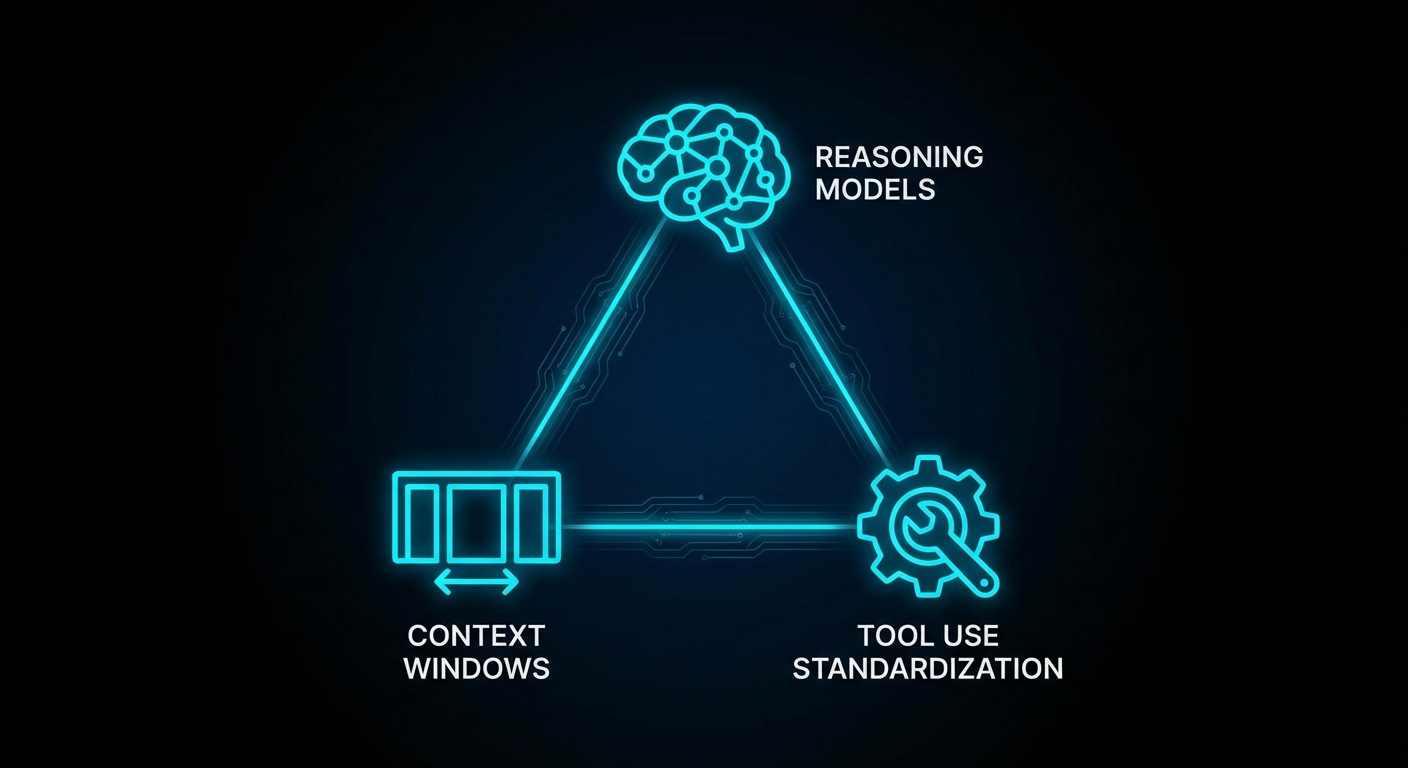

Three things converged in early 2026 to make agentic AI viable for everyday use:

- Reasoning models got good enough. Models like Claude Opus 4, GPT-4.5, and Gemini 2.0 can plan, reason, and course-correct without constant prompting.

- Context windows exploded. 1M-token context windows mean an agent can hold an entire codebase, years of emails, or a company's documentation in context at once.

- Tool use got standardized. Protocols like MCP (Model Context Protocol) gave AI models a common way to connect to browsers, databases, calendars, code editors, and APIs without custom integration work for each one.
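To make the third point concrete, here's a rough sketch of the idea behind standardized tool use: every tool publishes a name, a description, and the inputs it expects, and the agent calls tools by name through one shared entry point. This is not the MCP SDK or its wire format. `register_tool`, `list_tools`, `call_tool`, and the calendar tool are hypothetical names invented for this example.

```python
from typing import Any, Callable, Dict, List

# One shared registry: tool name -> description, expected inputs, handler.
REGISTRY: Dict[str, Dict[str, Any]] = {}

def register_tool(name: str, description: str,
                  parameters: Dict[str, str],
                  handler: Callable[..., Any]) -> None:
    REGISTRY[name] = {
        "description": description,
        "parameters": parameters,
        "handler": handler,
    }

def list_tools() -> List[Dict[str, Any]]:
    """What the model gets to see: descriptions only, never the code."""
    return [
        {"name": name,
         "description": tool["description"],
         "parameters": tool["parameters"]}
        for name, tool in REGISTRY.items()
    ]

def call_tool(name: str, arguments: Dict[str, Any]) -> Any:
    """Single entry point for every tool call the agent makes."""
    tool = REGISTRY[name]
    missing = [p for p in tool["parameters"] if p not in arguments]
    if missing:
        raise ValueError(f"missing arguments for {name}: {missing}")
    return tool["handler"](**arguments)

# Hypothetical example tool; a real one would talk to a calendar service.
register_tool(
    name="add_calendar_event",
    description="Create a calendar event",
    parameters={"title": "string", "date": "string"},
    handler=lambda title, date: f"Booked '{title}' on {date}",
)

print(list_tools())
print(call_tool("add_calendar_event", {"title": "Demo", "date": "2026-03-01"}))
```

Because every tool is described the same way, adding a new one means registering it once rather than rewriting the agent.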

The security question everyone asks first

This is the right question to ask. If an AI can send emails, run code, and access your files, what stops it from doing something it shouldn't?

The answer: sandboxing, permissions, and audit logs. Tools like NemoClaw (which wraps OpenClaw in a sandboxed environment with policy-based security controls) are built specifically for this. You define what your agent can and cannot do. You can review its actions. You can pull the plug anytime.
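Here's a small sketch of how permissions and audit logs fit together, assuming a simple allowlist. It is not NemoClaw's or OpenClaw's actual API; `Policy` and `AuditedAgent` are names made up for this example. It also leaves out real sandboxing, which is usually enforced at the operating system or container level rather than in a few lines of Python.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set

@dataclass
class Policy:
    allowed_actions: Set[str]
    enabled: bool = True  # set to False to "pull the plug"

@dataclass
class AuditedAgent:
    policy: Policy
    audit_log: List[Dict[str, object]] = field(default_factory=list)

    def request(self, action: str, target: str) -> bool:
        """Check an action against the policy and log the decision."""
        allowed = self.policy.enabled and action in self.policy.allowed_actions
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "target": target,
            "allowed": allowed,
        })
        return allowed

agent = AuditedAgent(Policy(allowed_actions={"read_file", "search_web"}))
print(agent.request("read_file", "notes.txt"))        # on the allowlist
print(agent.request("send_email", "boss@example.com"))  # not on the allowlist

agent.policy.enabled = False  # kill switch: every request is now refused
print(agent.request("read_file", "notes.txt"))

for entry in agent.audit_log:
    print(entry["action"], entry["target"], entry["allowed"])
```

Every request gets logged, including the refused ones, which is what lets you review what the agent tried to do and not only what it did.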

Is it perfect? No. The ecosystem is still young and best practices are evolving. But the building blocks exist to run agentic AI responsibly.

What can you actually use it for today?

Here are the use cases from the community that we see actually shipping:

- Research automation: Competitive analysis, market sizing, job posting monitoring, news tracking

- Code review and deployment: Autonomous code review, PR automation, CI/CD pipeline management

- Content operations: Automated newsletter production, blog post research and drafting, social media scheduling

- Security operations: 24/7 log monitoring, incident response automation, threat intelligence aggregation

- Personal productivity: Email triage, calendar management, meeting prep from notes

The honest downside

Agentic AI is not magic. It's not a self-running business in a box. The current state of the art has real limitations:

- It can drift. Give an agent a long-running goal and it may find unexpected, sometimes counterproductive paths to "solve" it.

- Cost management is tricky. Autonomous reasoning loops can burn through API credits fast if you're not careful.

- It needs guardrails. Without constraints, an agent will try to do everything, including things you didn't intend.

- It's still new. Debugging an autonomous agent is harder than debugging a script. Intent, reasoning, and tool selection can all fail in interesting ways.

All that said, for well-scoped, reversible tasks with clear success criteria, agentic AI is genuinely powerful. The key is starting small, building confidence, and expanding scope as you learn what works.
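One way to start small is to give every run a hard budget. The sketch below assumes you can estimate each step's cost before it runs. `RunBudget` and `run_with_budget` are hypothetical names, and costs are tracked in whole cents to keep the arithmetic exact.

```python
from typing import Iterable

class BudgetExceeded(Exception):
    """Raised when a run would go past its step or cost limit."""

class RunBudget:
    def __init__(self, max_steps: int, max_cost_cents: int) -> None:
        self.max_steps = max_steps
        self.max_cost_cents = max_cost_cents
        self.steps = 0
        self.cost_cents = 0

    def reserve(self, cost_cents: int) -> None:
        """Refuse a step that would break either limit; otherwise record it."""
        if self.steps + 1 > self.max_steps:
            raise BudgetExceeded("step limit reached")
        if self.cost_cents + cost_cents > self.max_cost_cents:
            raise BudgetExceeded("cost limit reached")
        self.steps += 1
        self.cost_cents += cost_cents

def run_with_budget(step_costs: Iterable[int], budget: RunBudget) -> int:
    """Run steps until they are done or the budget refuses one."""
    completed = 0
    for cost in step_costs:
        try:
            budget.reserve(cost)
        except BudgetExceeded:
            break  # stop cleanly instead of looping on
        completed += 1  # a real agent would do the step's work here
    return completed

# Four steps at 10 cents each against a 25-cent budget.
budget = RunBudget(max_steps=10, max_cost_cents=25)
print(run_with_budget([10, 10, 10, 10], budget))
```

The check happens before each step rather than after, so a runaway loop gets stopped before it spends the money.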

Where OpenClaw fits in

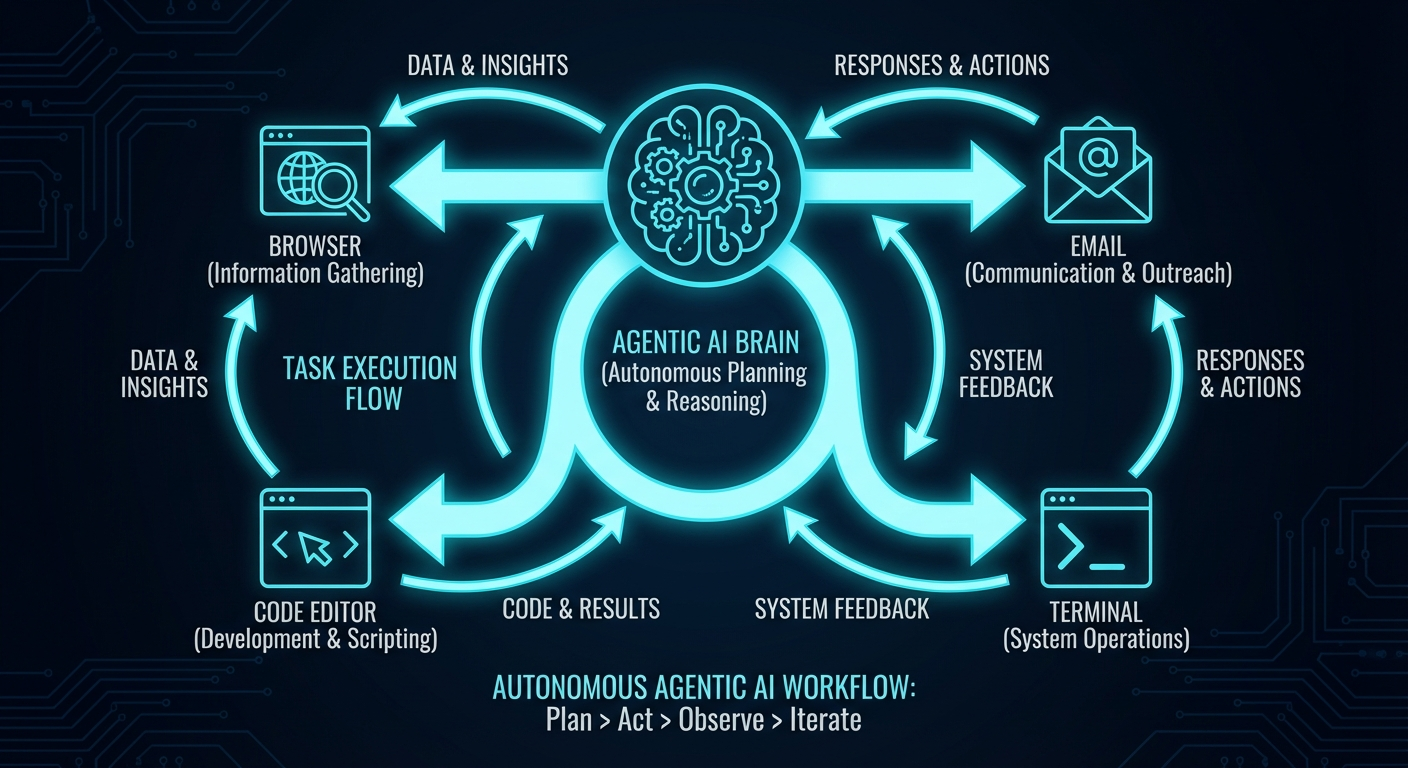

OpenClaw is an open-source framework that lets you run an AI agent on your own machine. Your laptop, a VPS, anywhere. It connects to language models, gives them tool access (browsers, code editors, terminals, messaging apps), and lets you define what your agent can do.

The point is simple: get the power of an agentic AI without handing over control of your data or infrastructure. Your agent runs where you run it. Your data stays where your data stays.

The bottom line

Agentic AI is a shift from "AI answers questions" to "AI gets things done." For developers, builders, and knowledge workers willing to spend a few hours learning the ropes, it's a real leverage point.

For everyone else, the next 12 months will see these tools get dramatically easier to use. The gap between "technically possible" and "actually usable by a normal person" is closing fast.

Want a hands-on way to get started? Download the free OpenClaw Starter Kit — a one-command sandbox that gets a secure agent running in under 5 minutes.